Enhancing Software Testing with AI

As software applications become more complex, efficient and effective testing methods matter more than ever. Artificial Intelligence (AI) can strengthen test automation, predictive analysis, and error detection, which makes it a valuable addition to modern software testing frameworks.

Identifying Test Cases Suitable for AI Integration

The first step in leveraging AI in software testing is to identify test cases that are suitable for AI integration. Not all test cases benefit equally from AI; hence, selecting the right candidates is crucial. Key considerations include:

- Repetitiveness: Tests that are repetitive and time-consuming are prime candidates for automation using AI.

- Complexity: Complex scenarios where traditional automation struggles can benefit from AI's ability to learn and adapt.

- Data-Driven: Tests that require extensive data analysis can use AI's ability to recognize patterns and anomalies.

By carefully selecting test cases based on these criteria, organizations can optimize the impact of AI on their testing efforts.
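To make the selection criteria concrete, the sketch below scores candidate tests on the three criteria and ranks them. The `Candidate` fields, the normalization constants, and the weights are hypothetical illustrations, not calibrated values; a real team would substitute metrics from its own test management system.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """A test described by hypothetical proxies for the three selection criteria."""
    name: str
    runs_per_week: int     # repetitiveness: how often the test is executed
    manual_minutes: float  # time-consuming: effort per run
    flaky_failures: int    # complexity: recent failures that scripted checks struggled with
    data_rows: int         # data-driven: size of the data set the test must analyze


def ai_suitability(c: Candidate, weights=(0.4, 0.3, 0.3)) -> float:
    """Combine the criteria into a score between 0 and 1 (illustrative weights summing to 1)."""
    w_rep, w_cx, w_data = weights
    # Each criterion is capped at 1.0 so no single factor dominates the score.
    repetitiveness = min(c.runs_per_week * c.manual_minutes / 600.0, 1.0)
    complexity = min(c.flaky_failures / 10.0, 1.0)
    data_driven = min(c.data_rows / 10_000.0, 1.0)
    return w_rep * repetitiveness + w_cx * complexity + w_data * data_driven


def rank_candidates(cases: list[Candidate]) -> list[tuple[str, float]]:
    """Return (name, score) pairs, most suitable first."""
    scored = [(c.name, round(ai_suitability(c), 3)) for c in cases]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    cases = [
        Candidate("login_smoke", runs_per_week=50, manual_minutes=12, flaky_failures=1, data_rows=0),
        Candidate("checkout_flow", runs_per_week=10, manual_minutes=30, flaky_failures=8, data_rows=2000),
        Candidate("tax_rules", runs_per_week=5, manual_minutes=5, flaky_failures=0, data_rows=50_000),
    ]
    print([name for name, _ in rank_candidates(cases)])
    # -> ['checkout_flow', 'login_smoke', 'tax_rules']
```

A weighted sum keeps the scoring transparent, so the team can debate the weights explicitly during planning instead of trusting an opaque ranking.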

Selecting Appropriate Tools for AI-Enhanced Testing

Once suitable test cases are identified, the next step is to select appropriate tools that facilitate AI integration. Several tools in the market cater to different aspects of AI-enhanced testing:

- Selenium with AI Plugins: Conventional automation tools such as Selenium can be extended with third-party AI add-ons, for example self-healing locators that keep a test running when an element's selector changes.

- Testim.io: Uses machine learning to make tests more stable and easier to maintain by automatically adapting element locators to UI changes.

- Applitools: Provides visual AI testing, which compares the rendered UI against approved baselines to catch visual regressions that functional assertions alone would miss.

The right tool usually depends on the organization's existing ecosystem, budget constraints, and specific testing needs.
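The "adapting to UI changes" idea behind self-healing tools can be illustrated without any vendor API. In this minimal sketch, page elements are modeled as plain attribute dictionaries (an assumption standing in for real browser elements), the recorded locator is tried first, and if it no longer matches, the element sharing the most recorded attributes is used instead. It is not how Testim or any particular Selenium plugin is implemented.

```python
from typing import Optional

# Hypothetical stand-in for a DOM element: attribute name -> value.
Element = dict[str, str]


def similarity(recorded: Element, candidate: Element) -> float:
    """Fraction of the recorded attributes that the candidate still shares."""
    if not recorded:
        return 0.0
    matches = sum(1 for key, value in recorded.items() if candidate.get(key) == value)
    return matches / len(recorded)


def find_element(page: list[Element], recorded: Element, min_score: float = 0.5) -> Optional[Element]:
    """Use the primary locator (id) if it still matches; otherwise 'heal' to the closest element."""
    primary_id = recorded.get("id")
    if primary_id is not None:
        for element in page:
            if element.get("id") == primary_id:
                return element
    best = max(page, key=lambda el: similarity(recorded, el), default=None)
    if best is not None and similarity(recorded, best) >= min_score:
        return best  # healed: the test keeps running despite the UI change
    return None      # nothing similar enough; surface a genuine failure


if __name__ == "__main__":
    recorded = {"id": "submit-btn", "tag": "button", "text": "Submit", "class": "btn primary"}
    redesigned_page = [
        {"id": "cancel", "tag": "button", "text": "Cancel", "class": "btn"},
        {"id": "submit-button", "tag": "button", "text": "Submit", "class": "btn primary"},
    ]
    print(find_element(redesigned_page, recorded))
```

The `min_score` threshold is the key design choice: set it too low and the test may silently act on the wrong element, which is a good reason to log every healed match for human review.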

Implementing Predictive Analysis in Testing

Predictive analysis is one of the most compelling applications of AI in software testing. It involves analyzing historical data to predict potential failures and focus testing efforts where they are needed most. Here's a basic workflow for implementing predictive analysis:

- Data Collection: Gather historical test results, defect logs, and performance metrics.

- Data Preprocessing: Cleanse and prepare data for analysis, ensuring consistency and accuracy.

- Model Training: Use machine learning algorithms to train models that can predict failure points or bottlenecks.

- Integration: Connect the trained models to your CI/CD pipeline so they surface risk estimates during each testing cycle.
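The workflow above can be sketched end to end in plain Python. This example assumes a hypothetical history of per-module records (lines changed, past defects, and whether the module later failed), uses logistic regression trained by gradient descent as a simple stand-in for whatever model a team would actually choose, and returns a failure probability for a new change. In practice a library such as scikit-learn would replace the hand-written training loop.

```python
import math
from typing import Optional

# Hypothetical history: (lines_changed, past_defects, failed_later); None = missing value.
HISTORY: list[tuple[Optional[float], Optional[float], int]] = [
    (500, 4, 1), (420, 3, 1), (380, 5, 1),
    (60, 0, 0), (30, 1, 0), (80, 0, 0),
    (None, 2, 1),  # incomplete record, dropped during preprocessing
]


def preprocess(records):
    """Data preprocessing: drop incomplete rows and min-max scale each feature to [0, 1]."""
    clean = [r for r in records if r[0] is not None and r[1] is not None]
    features = [[float(r[0]), float(r[1])] for r in clean]
    labels = [r[2] for r in clean]
    mins = [min(col) for col in zip(*features)]
    spans = [(max(col) - min(col)) or 1.0 for col in zip(*features)]  # avoid dividing by 0
    scaled = [[(x - lo) / s for x, lo, s in zip(row, mins, spans)] for row in features]
    return scaled, labels, (mins, spans)


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train(X, y, lr=0.5, epochs=2000):
    """Model training: fit logistic regression weights with batch gradient descent."""
    n = len(X[0])
    w, b = [0.0] * n, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n, 0.0
        for xi, yi in zip(X, y):
            error = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
            for j in range(n):
                grad_w[j] += error * xi[j]
            grad_b += error
        w = [wj - lr * g / len(X) for wj, g in zip(w, grad_w)]
        b -= lr * grad_b / len(X)
    return w, b


def predict_risk(model, scaler, lines_changed: float, past_defects: float) -> float:
    """Probability that a module with these metrics fails, scaled like the training data."""
    w, b = model
    mins, spans = scaler
    x = [(v - lo) / s for v, lo, s in zip((lines_changed, past_defects), mins, spans)]
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)


if __name__ == "__main__":
    X, y, scaler = preprocess(HISTORY)
    model = train(X, y)
    print(f"risk for a large change: {predict_risk(model, scaler, 450, 4):.2f}")
    print(f"risk for a small change: {predict_risk(model, scaler, 40, 0):.2f}")
```

Reusing the scaling parameters from training (`scaler`) at prediction time matters: scaling new data with its own minimum and maximum would silently shift every prediction.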

This approach helps testers anticipate defects earlier in the development lifecycle, which can improve overall software quality and shorten time-to-market.
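One way the CI/CD integration step helps surface defects early is risk-based test ordering: suites that exercise the riskiest modules run first, inside a fixed time budget, so likely failures are reported quickly. The sketch below assumes risk scores are already available per module (for example from a model trained on historical results as in the workflow) and that each suite maps to one module; the `Suite` type and the budget are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Suite:
    name: str
    module: str        # module the suite exercises (hypothetical one-to-one mapping)
    duration_s: float  # typical runtime in seconds


def prioritize(suites: list[Suite], risk_by_module: dict[str, float],
               fast_lane_budget_s: float) -> tuple[list[str], list[str]]:
    """Split suites into a fast lane (highest risk first, within budget) and the remainder.

    Modules without a prediction get a risk of 0.0, so they run in the later stage.
    """
    ordered = sorted(suites, key=lambda s: risk_by_module.get(s.module, 0.0), reverse=True)
    fast_lane, remaining, used = [], [], 0.0
    for suite in ordered:
        # Greedy fill: a cheaper, lower-risk suite may still fit after a costly one is skipped.
        if used + suite.duration_s <= fast_lane_budget_s:
            fast_lane.append(suite.name)
            used += suite.duration_s
        else:
            remaining.append(suite.name)
    return fast_lane, remaining


if __name__ == "__main__":
    suites = [
        Suite("payments_tests", "payments", 120),
        Suite("search_tests", "search", 60),
        Suite("profile_tests", "profile", 30),
    ]
    risk = {"payments": 0.8, "search": 0.2, "profile": 0.5}
    print(prioritize(suites, risk, fast_lane_budget_s=160))
    # -> (['payments_tests', 'profile_tests'], ['search_tests'])
```

Defaulting unknown modules to zero risk favors speed; a team worried about blind spots might instead default them to a high score so unscored areas run first.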

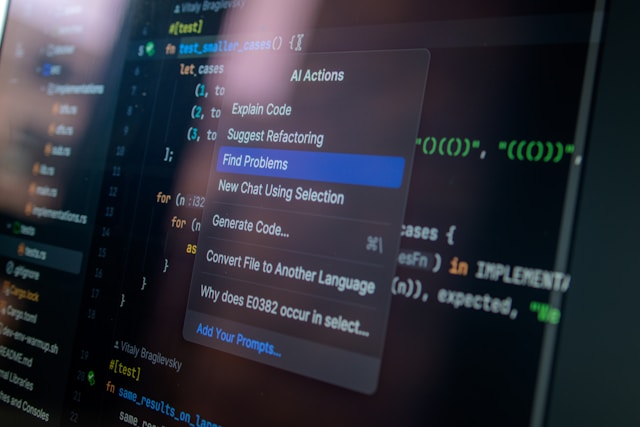

Intelligent Error Detection with AI

Error detection is a core component of software testing. By integrating AI, testers can achieve more intelligent error detection that goes beyond static rule-based checks:

- Anomaly Detection: AI can identify unusual patterns in system behavior that might indicate underlying issues.

- Error Classification: Classifying errors using machine learning models helps prioritize bug fixes based on severity and impact.
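The anomaly detection idea can be illustrated with a deliberately simple statistical baseline rather than a learned model: values from known-good runs define what "normal" looks like, and new measurements more than a chosen number of standard deviations away are flagged. The response-time numbers and the threshold of 3 are assumptions for illustration.

```python
import statistics


def find_anomalies(baseline: list[float], observations: list[float], threshold: float = 3.0) -> list[int]:
    """Return indices of observations whose z-score against the baseline exceeds `threshold`.

    baseline: metric values from known-good runs (needs at least two values).
    observations: new values from the run under test.
    """
    mean = statistics.fmean(baseline)
    stdev = statistics.stdev(baseline)
    if stdev == 0:
        # A perfectly constant baseline: any deviation at all is unusual.
        return [i for i, v in enumerate(observations) if v != mean]
    return [i for i, v in enumerate(observations) if abs(v - mean) / stdev > threshold]


if __name__ == "__main__":
    baseline_ms = [100, 102, 98, 101, 99, 100, 103, 97]  # hypothetical response times
    latest_ms = [101, 140, 99, 60]
    print(find_anomalies(baseline_ms, latest_ms))  # -> [1, 3]
```

A learned model earns its place over this baseline when "normal" depends on context, such as time of day or load, which a single mean and standard deviation cannot capture.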

Because AI models can adapt and learn from past data, they can improve the accuracy of error detection, reducing both false positives and false negatives.
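Whether a detector actually reduces false positives and negatives is something a team can measure rather than assume. A minimal sketch, assuming a set of past runs labeled as genuinely faulty or not: run both the existing rule-based checks and the AI detector over those runs and compare the two rates this function returns. The run indices in the example are hypothetical.

```python
def error_rates(flagged: set[int], faulty: set[int], total_runs: int) -> tuple[float, float]:
    """Return (false_positive_rate, false_negative_rate) for one detector.

    flagged: indices of runs the detector reported as errors.
    faulty: indices of runs that really contained an error (ground-truth labels).
    total_runs: number of labeled runs, indexed 0..total_runs - 1.
    """
    true_pos = len(flagged & faulty)
    false_pos = len(flagged - faulty)
    false_neg = len(faulty - flagged)
    true_neg = total_runs - true_pos - false_pos - false_neg
    fpr = false_pos / (false_pos + true_neg) if false_pos + true_neg else 0.0
    fnr = false_neg / (false_neg + true_pos) if false_neg + true_pos else 0.0
    return fpr, fnr


if __name__ == "__main__":
    faulty_runs = {2, 7}  # hypothetical ground truth over 10 labeled runs
    fpr, fnr = error_rates({1, 2, 4, 5}, faulty_runs, total_runs=10)
    print(f"FPR={fpr:.3f} FNR={fnr:.3f}")
```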

A Practical Framework for Integrating AI into Software Testing

To successfully integrate AI into your software testing framework, consider the following practical steps:

Step 1: Assessment and Planning

Evaluate current testing processes to identify areas that will benefit most from AI enhancements. Develop a clear plan outlining goals, required resources, and timelines.

Step 2: Pilot Program

Start with a pilot program targeting a small set of high-impact test cases. This approach allows you to measure results and refine techniques before scaling up.
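Measuring pilot results is easier when the success criteria are written down before the pilot starts. The sketch below compares baseline and pilot metrics and checks them against a minimum improvement; the metric names and the 10% threshold are hypothetical examples, not recommended targets.

```python
def compare_pilot(baseline: dict[str, float], pilot: dict[str, float],
                  higher_is_better: dict[str, bool]) -> dict[str, float]:
    """Relative change per metric, signed so that a positive value always means improvement."""
    report = {}
    for metric, before in baseline.items():
        if before == 0:
            raise ValueError(f"baseline for {metric!r} is zero; relative change is undefined")
        change = (pilot[metric] - before) / before
        report[metric] = change if higher_is_better[metric] else -change
    return report


def pilot_succeeded(report: dict[str, float], min_improvement: float = 0.10) -> bool:
    """True only if every tracked metric improved by at least `min_improvement`."""
    return all(delta >= min_improvement for delta in report.values())


if __name__ == "__main__":
    baseline = {"defects_found": 20, "maintenance_hours": 10}  # hypothetical numbers
    pilot = {"defects_found": 24, "maintenance_hours": 7}
    report = compare_pilot(baseline, pilot, {"defects_found": True, "maintenance_hours": False})
    print(report, pilot_succeeded(report))
```

Requiring every metric to improve is strict; a team might instead require its primary metric to improve while the others merely do not regress.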

Step 3: Tool Selection and Training

Select appropriate AI tools based on the pilot outcomes. Ensure the team is adequately trained on both the tools and the principles of AI-enhanced testing.

Step 4: Continuous Monitoring and Optimization

Monitor performance metrics continuously and optimize models as needed. Feedback loops are essential for refining predictive accuracy and automation effectiveness.
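A feedback loop for a predictive model can be as simple as comparing each prediction with what actually happened and flagging the model for retraining when its recent accuracy drops. This is a minimal sketch; the window size and accuracy threshold are assumptions to tune, and `ModelMonitor` is a hypothetical helper rather than part of any tool.

```python
from collections import deque


class ModelMonitor:
    """Hypothetical helper: tracks how often recent predictions matched reality."""

    def __init__(self, window: int = 50, min_accuracy: float = 0.8):
        self.outcomes = deque(maxlen=window)  # True = prediction was correct
        self.min_accuracy = min_accuracy

    def record(self, predicted_failure: bool, actual_failure: bool) -> None:
        self.outcomes.append(predicted_failure == actual_failure)

    def accuracy(self) -> float:
        return sum(self.outcomes) / len(self.outcomes) if self.outcomes else 1.0

    def needs_retraining(self) -> bool:
        # Wait for a full window so a handful of early misses cannot trigger retraining.
        full = len(self.outcomes) == self.outcomes.maxlen
        return full and self.accuracy() < self.min_accuracy


if __name__ == "__main__":
    monitor = ModelMonitor(window=4, min_accuracy=0.75)
    for predicted, actual in [(True, True), (False, False), (True, True), (True, False), (False, True)]:
        monitor.record(predicted, actual)
    print(monitor.accuracy(), monitor.needs_retraining())  # -> 0.5 True
```

Accuracy is the simplest signal; when failures are rare, tracking missed failures separately is more informative, because a model that never predicts a failure can still look accurate.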

Step 5: Full-Scale Implementation

Expand the use of AI across more test cases as confidence grows. Regularly revisit strategies to incorporate new advancements in AI technology.

This framework encourages a phased approach to integrating AI, limiting risk while the benefits are proven out. By following these steps, organizations can make the transition to AI-driven software testing more predictable and more likely to succeed.